Abstract

The recent trend of emerging high-quality Augmented Reality (AR) glasses offered the possibility for visually exciting application scenarios. However, the interaction with these devices is often challenging since current input methods most of the time lack haptic feedback and are limited in their user interface controls. With this work, we introduce CHARM, a combination of a belt-worn interaction device, utilizing a retractable cord, and a set of interaction techniques to enhance AR input capabilities with physical controls and spatial constraints. Building on our previous research, we created a fully-functional prototype to investigate how body-worn string devices can be used to support generic AR tasks. We contribute a radial widget menu for system control as well as transformation techniques for 3D object manipulation. To validate our interaction concepts for system control, we implemented a mid-air gesture interface as a baseline and evaluated our prototype in two formative user studies. Our results show that our approach provides flexibility regarding possible interaction mappings and was preferred for manipulation tasks compared to mid-air gesture input.

Publication

@inproceedings{Klamka2019b,

author = {Konstantin Klamka and Patrick Reipschl\"{a}ger and Raimund Dachselt},

title = {CHARM: Cord-based Haptic Augmented Reality Manipulation},

booktitle = {21st International Conference on Human-Computer Interaction - Virtual, Augmented and Mixed Reality. Multimodal Interaction},

series = {Lecture Notes in Computer Science (11574) - Virtual, Augmented and Mixed Reality: Multimodal Interaction},

volume = {9},

year = {2019},

month = {7},

isbn = {978-3-030-21607-8},

location = {Orlando, Florida, USA},

pages = {96--114},

numpages = {19},

doi = {10.1007/978-3-030-21607-8_8},

url = {https://doi.org/10.1007/978-3-030-21607-8_8},

publisher = {Springer International Publishing},

address = {Cham},

keywords = {Augmented Reality; Haptic Feedback; Elastic Input; Cord Input; Radial Menu; 3D Interaction; 3D Transformation; Wearable Computing.}

}

List of additional material

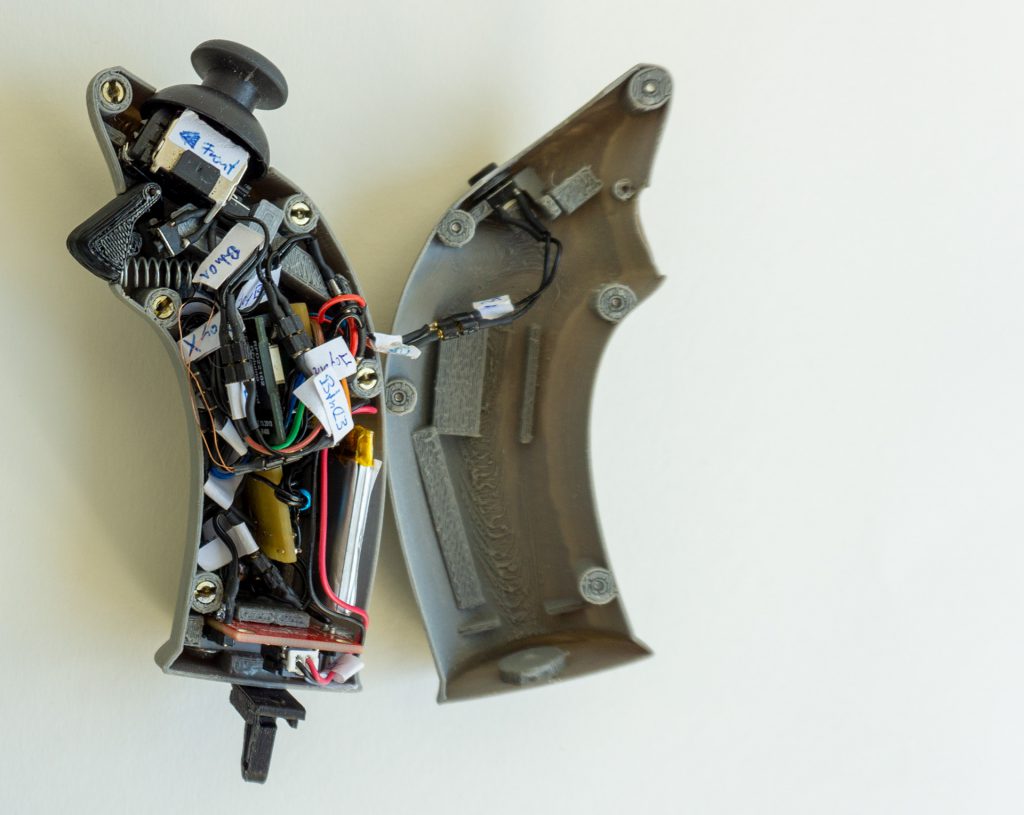

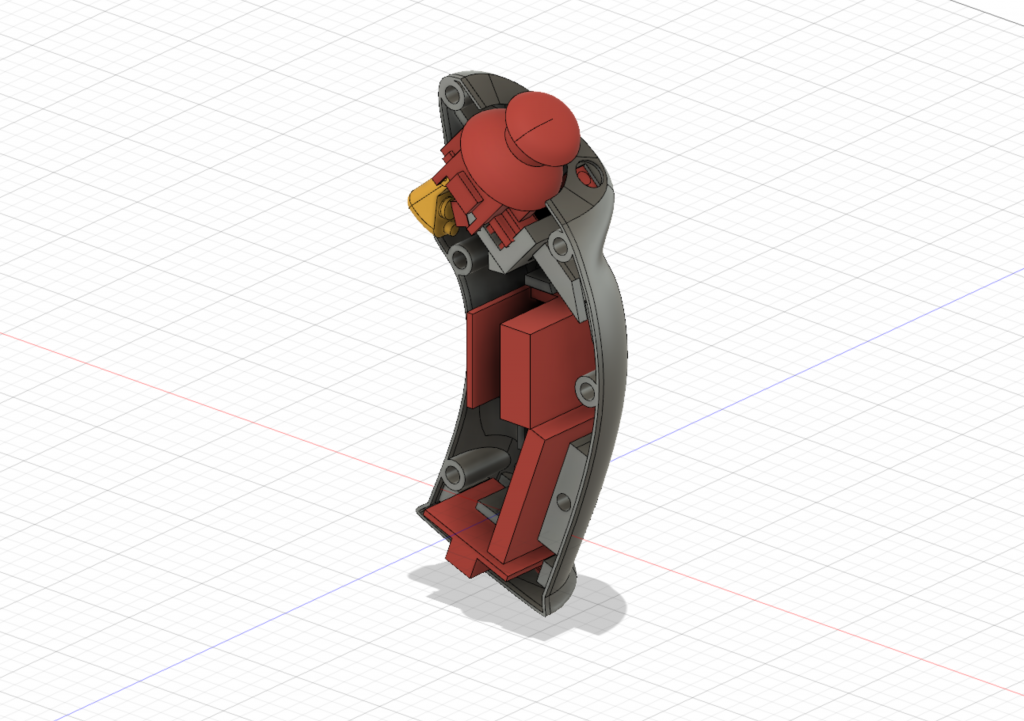

CHARM Handle

We developed a CHARM handle that can be seamlessly clipped to our belt-worn CHARM controller. The handle provides versatile I/O capabilities, including a two-axis thumb-joystick, a trigger, two additional selection buttons, vibro-tactile feedback, and a native wireless Bluetooth Low Energy connection to the Microsoft HoloLens.
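To make the handle's input channels concrete, the following minimal sketch shows one way the joystick axes and button states could be packed into a compact payload and decoded on the receiving side. The three-byte layout, the bit positions, and all names (`HandleState`, `pack_state`, `unpack_state`) are assumptions for illustration only; they do not describe the actual CHARM firmware or its Bluetooth protocol. A payload this small would fit comfortably within the 20-byte default attribute payload of Bluetooth Low Energy.

```python
import struct
from dataclasses import dataclass

# Hypothetical bit positions for the handle's buttons (assumption, not the
# documented CHARM firmware layout).
TRIGGER = 0x01      # spring-loaded trigger
SELECT_A = 0x02     # first selection button
SELECT_B = 0x04     # second selection button
STICK_CLICK = 0x08  # push-button integrated in the thumb-joystick

# Little-endian: int8 joystick x, int8 joystick y, uint8 button bitmask.
_FORMAT = "<bbB"


@dataclass(frozen=True)
class HandleState:
    stick_x: int  # joystick deflection, -127..127
    stick_y: int  # joystick deflection, -127..127
    buttons: int  # bitwise OR of the button masks above

    def pressed(self, mask: int) -> bool:
        """Return True if the button identified by `mask` is pressed."""
        return bool(self.buttons & mask)


def pack_state(state: HandleState) -> bytes:
    """Serialize a handle state into a 3-byte payload."""
    return struct.pack(_FORMAT, state.stick_x, state.stick_y, state.buttons)


def unpack_state(payload: bytes) -> HandleState:
    """Decode a 3-byte payload back into a handle state."""
    stick_x, stick_y, buttons = struct.unpack(_FORMAT, payload)
    return HandleState(stick_x, stick_y, buttons)
```

A receiver could then call `unpack_state(payload).pressed(TRIGGER)` to check the trigger independently of the other buttons.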

In the following sections, we provide the construction details. We first list all hardware components and then present the necessary 3D-printed parts.

CHARM Handle Parts

In the following, we will list all components that we used to create our CHARM handle device.

| Image | Part | Description |  |

Jumper wire Yv, 1 x 0.20 mm², Black |

We used isolated standard jumper wire to hook up all sensors and output channels. However, there are not special requirements for the wires. Basically, you can use nearly every isolated cable. |

|---|---|---|

|

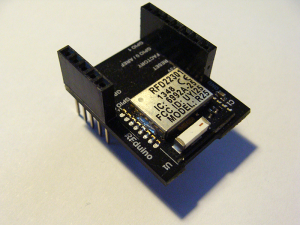

RFduino | For our handle controller, we used the RFduino microcontroller which basically consists of a Nordic Semiconductor nRF51822 chip with a Arduino-compatible bootloader. For rapid prototyping, we decided to use the bigger version with 0.1″ pin breakouts. However, for further miniaturizations a smaller SMD version of the microcontroller (size of a finger-tip) is still available. |

|

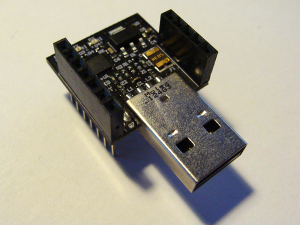

FTDI Programmer | In order to upload new firmware to our research platform, we used this tiny FTDI programmer that can be directly stacked RFduino. However, every other 3.3V FTPI programmer works as well. You have simply to hook up the following pins: receive pin (RX, 0), transmit pin (TX, 1), reset pin, ground and +3V. |

|

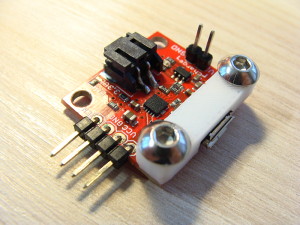

Thumb-Joystick | In order to integrate a small thumb-joystick, we used a two-axis joystick with two potentiometers and an additional push-button. |

|

Springs | We used a spring to realize the trigger button. |

|

Access to 3D-Printer | In order to 3D print all construction parts you will need access to basic 3D-Printer. |

|

Screws and Nuts | We used several metric screws and nuts to fix all 3D printed parts and electronics. |

|

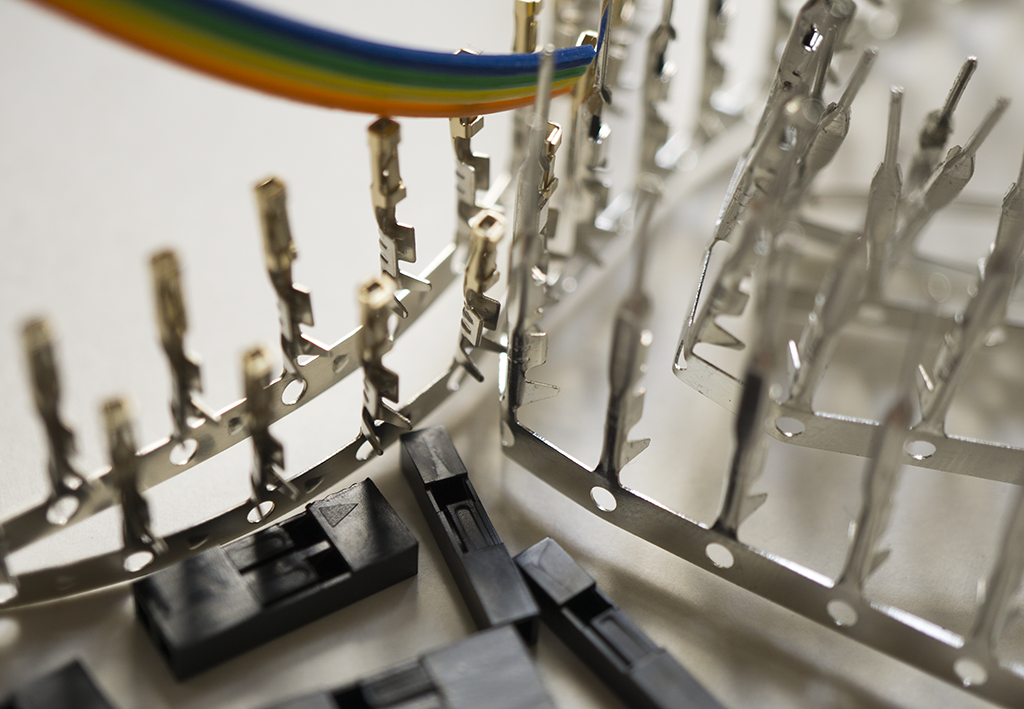

Cable, Crimp Contacts and Housings | We used several standard pin headers to design our CHARM handle device as flexible as possible without hardwiring all components. |

|

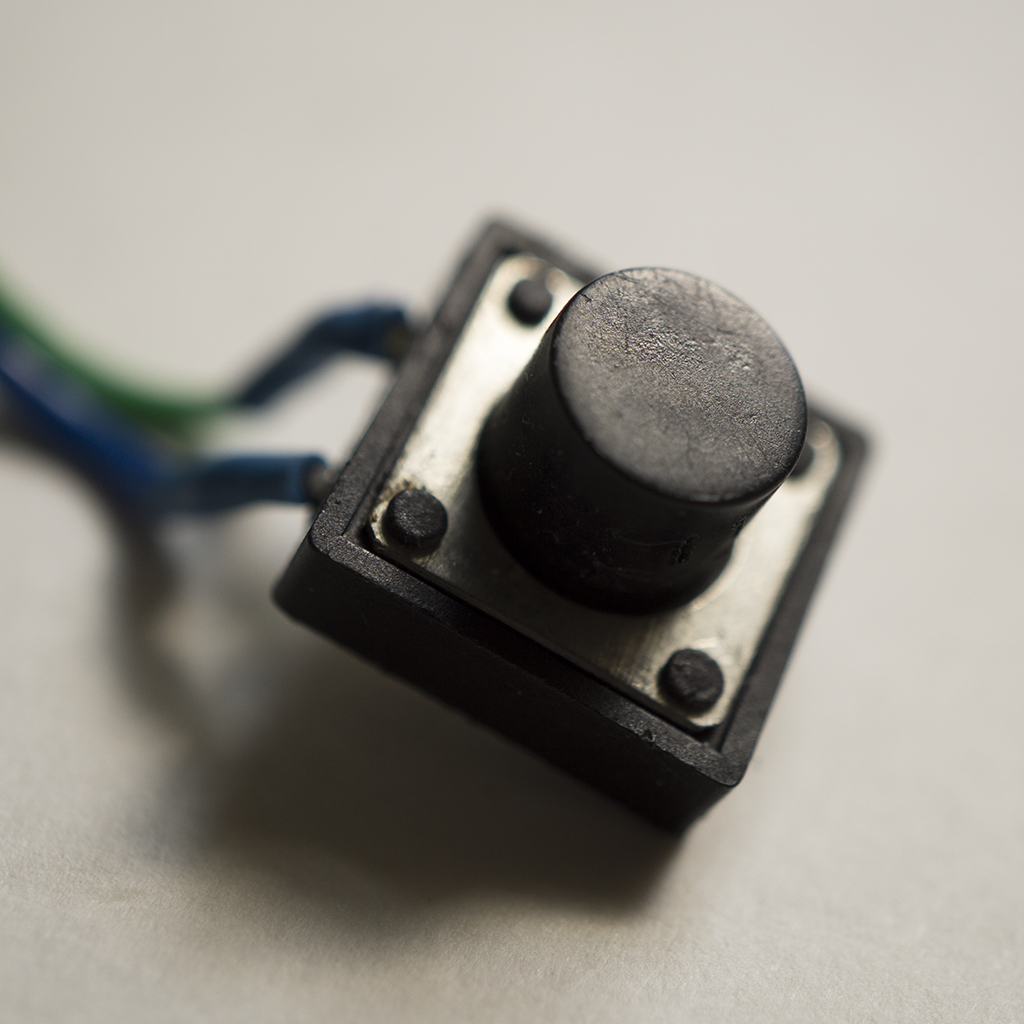

Push-Button | A push-button acts as a trigger for the trigger as well as selection buttons and provide a nice tactile feedback. |

|

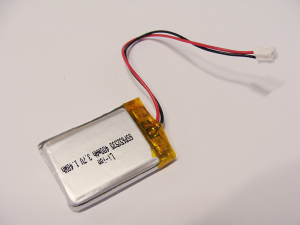

LiPo Accu – 400mAh PRT-10718 | We used a standard 400mAh lithium polymer battery to power the handle. The battery provides electric power to the microprocessor including its associated logic parts. |

|

SparkFun Power Cell – LiPo Charger/Booster PRT-11231 | We used a power management board from Sparkfun with a Microship MCP73831 chip. This allows us, versatile charging capabilities including micro-usb and inductive charging. In order to avoid any damage on the micro-usb port on the board during the development (cf. Sparkfun customer reviews), we strengthen this part with a custom-printed part. |
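
Because the thumb-joystick listed above reports each axis through a potentiometer, the raw readings usually need to be centered and given a small deadzone before they are useful as input. The sketch below illustrates this under stated assumptions: a 10-bit analog reading (0 to 1023), a resting position near 512, and a deadzone of 8 %. These values and the function name `normalize_axis` are hypothetical and are not taken from the CHARM firmware.

```python
def normalize_axis(raw: int, center: int = 512, full_scale: int = 1023,
                   deadzone: float = 0.08) -> float:
    """Map a raw potentiometer reading to the range -1.0..1.0.

    Assumes a 10-bit ADC by default (hypothetical values). Readings within
    `deadzone` of the center are reported as 0.0 so that a resting stick
    does not drift.
    """
    # Use the larger half-range so the result never exceeds +/-1 before clamping.
    half_range = max(center, full_scale - center)
    value = (raw - center) / half_range
    value = max(-1.0, min(1.0, value))

    if abs(value) < deadzone:
        return 0.0

    # Rescale so the output ramps from 0 at the deadzone edge to 1 at full
    # deflection instead of jumping when the deadzone is left.
    sign = 1.0 if value > 0 else -1.0
    return sign * (abs(value) - deadzone) / (1.0 - deadzone)
```

In practice, the center value would be measured once at start-up while the stick is at rest, since potentiometer joysticks rarely rest at exactly half scale.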

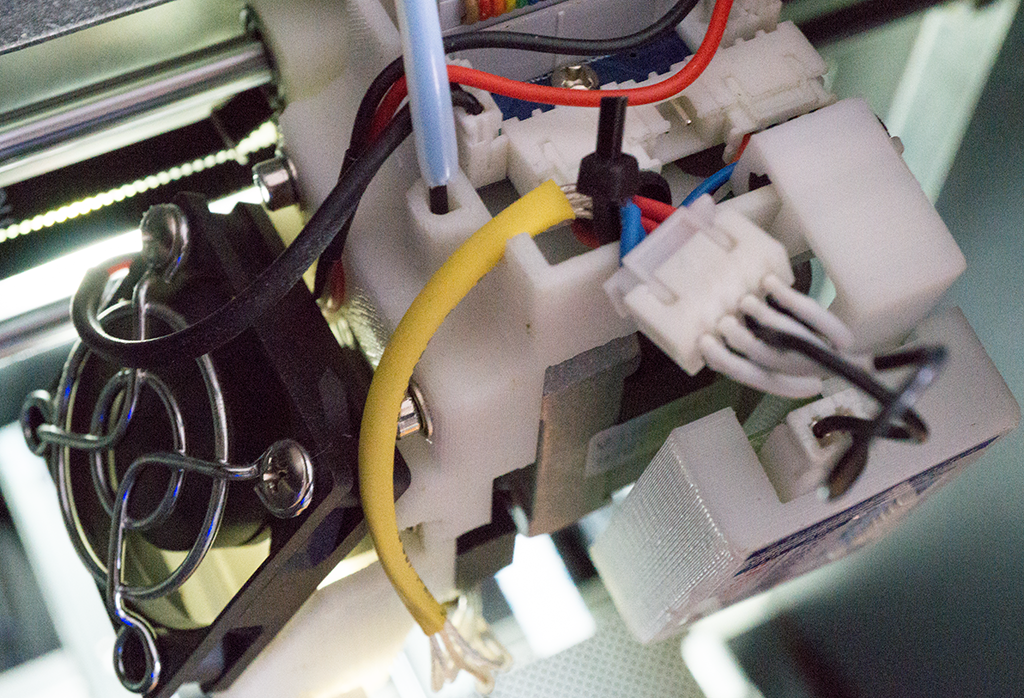

CHARM Handle Housing

Our CHARM handle consists of a 3D-printed housing, a trigger button and two selection buttons. In addition, we integrated a two-axis thumb-joystick with an additional button. To organize all hardware components, we designed a housing that fits all parts.

You can download all parts as single STL-files to 3D-print the parts on your own:

Related Student Theses

Comparing Elasticcon and free hand gestures to control innovative menu concepts for AR scenarios

Thomas Schwab July 10th, 2017 until December 18th, 2017

Supervision: Konstantin Klamka, Patrick Reipschläger, Raimund Dachselt

Implementation of innovative menu concepts with Elasticcon in AR scenarios

Paul Riedel June 16th, 2017 until September 1st, 2017

Supervision: Konstantin Klamka, Patrick Reipschläger, Raimund Dachselt

Acknowledgements

We would like to thank Andreas Peetz for helping us to improve the CHARM handle and Thomas Schwab as well as Paul Riedel for working on the AR menu and gesture control. This work was partly funded by the Centre for Tactile Internet with Human-in-the-Loop (CeTI), a Cluster of Excellence at TU Dresden.